troubleshooting BSD TCP network performance: part 2 (fixing NetBSD)

This is a follow-up to a previous article, linked here.

In the previous article I played around with some BSDs and measured their TCP and HTTP speeds over long distances to see if they fit my use case.

I was mostly satisfied with the result except for NetBSD. After some digging I was able to find the issue and nudge it towards competitive speeds:

Not only that, but I found out that it can actually hit pretty big numbers if properly tuned, which I'll show below.

Perhaps unsurprisingly, most of it had to do with the VirtIO driver, which was affecting not only NetBSD but all of the systems I tested.

Index

- Overview

- Previous NetBSD Speeds (VirtIO)

- Switching NetBSD to E1000

- Switching everything to E1000

- Tuned performance on E1000

- SCP trouble

- TCP stack patch

- My personal take

Overview

I've been very fixated on the BSDs lately. A week ago I tried installing them on every VM I could find, and using them was a breeze, except for one important detail: network speeds over a high-latency, congested WAN.

In the previous article I dug a bit into testing TCP, UDP and HTTP speeds, TCP being particularly sensitive to congestion. After some tuning I ended up with pretty satisfying results, except for NetBSD.

NetBSD showed deplorable performance no matter where I installed it or which knobs I turned, until I was able to find the culprit.

Previous NetBSD Speeds (VirtIO)

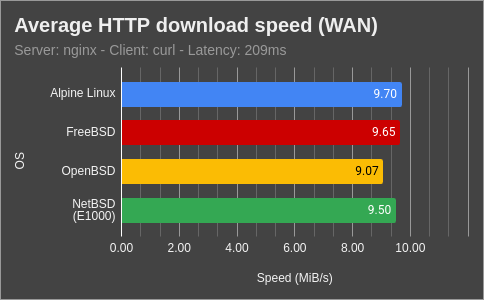

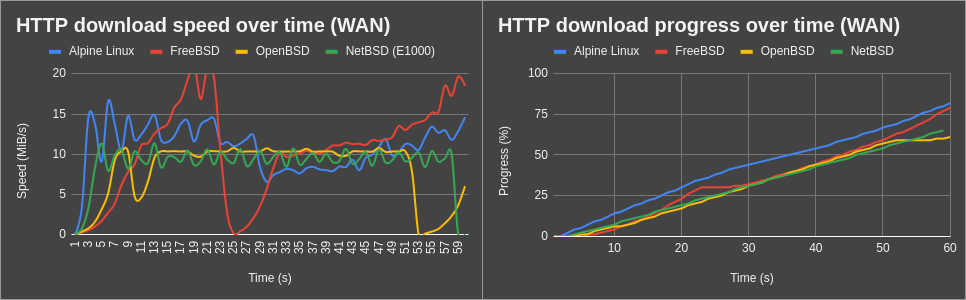

The best performance I could previously get from NetBSD (green), carried over from the previous article, was this:

This is NetBSD with default settings, installed on a KVM VM using VirtIO as its virtual network interface. You can see NetBSD crawling at around 900 KiB/s. No matter how much I increased the TCP buffer sizes, it just wasn't able to reach higher speeds.
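For context on why buffer sizes are the usual first suspect: a TCP sender can have at most one window of data in flight per round trip, so throughput tops out at roughly window size divided by RTT. Below is a minimal Python sketch of that arithmetic. The 150 ms RTT is a made-up example value, not a measurement from these tests, and the function names are only for illustration.

```python
def max_throughput(window_bytes: int, rtt_ms: int) -> float:
    """Upper bound on single-connection TCP throughput, in bytes per second.

    At most one window of data can be unacknowledged per round trip.
    """
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return window_bytes * 1000 / rtt_ms


def required_window(target_bytes_per_sec: int, rtt_ms: int) -> int:
    """Bandwidth-delay product: bytes in flight needed to sustain the target rate."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    # Ceiling division, done on integers to avoid float rounding.
    return -((-target_bytes_per_sec * rtt_ms) // 1000)


if __name__ == "__main__":
    RTT_MS = 150  # hypothetical long-distance round-trip time
    for window in (32 * 1024, 256 * 1024, 4 * 1024 * 1024):
        rate = max_throughput(window, RTT_MS)
        print(f"{window:>8} byte window -> at most {rate / 1e6:.2f} MB/s")
    print("window for 35 MB/s:", required_window(35_000_000, RTT_MS), "bytes")
```

This bound only explains a slow transfer when the window really is the bottleneck. Here, raising the buffers changed nothing, which suggested the problem was somewhere else.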

Switching NetBSD to E1000

I don't really need high speeds for my usage, but capping at 900 KiB/s wasn't acceptable for me. This is why I spent a full week reading about NetBSD sysctl tunables, trying different values and asking around on their mailing list.

Then an anonymous user asked me: "Have you tried E1000?" "No way," I said. UDP speeds were totally fine, so the VirtIO interface seemed to be working as it should. But I was desperate, so sure, why not.

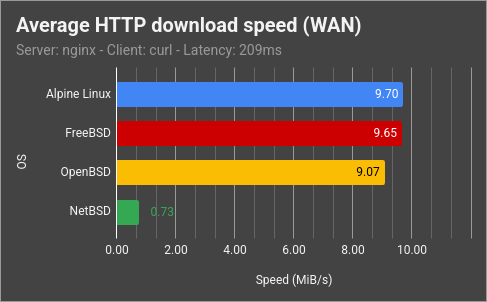

I was not expecting the result:

NetBSD speeds were immediately fixed. Using similar buffer sizes, it wasn't any slower than the other BSDs, or Linux. Don't ask me why, though.

Switching everything to E1000

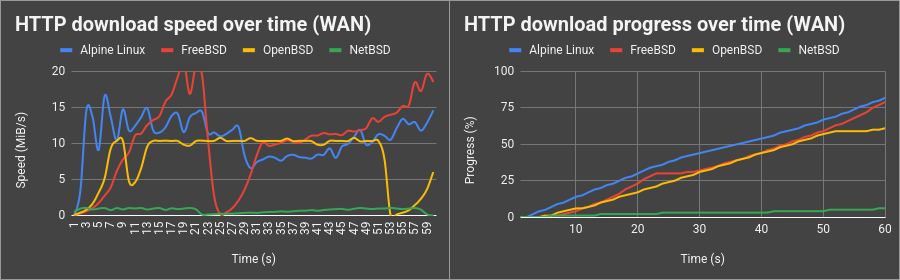

Now that I was at it, I decided to switch every OS to E1000 as well. I wasn't expecting this either, but in my environment VirtIO was hurting TCP performance on all of them.

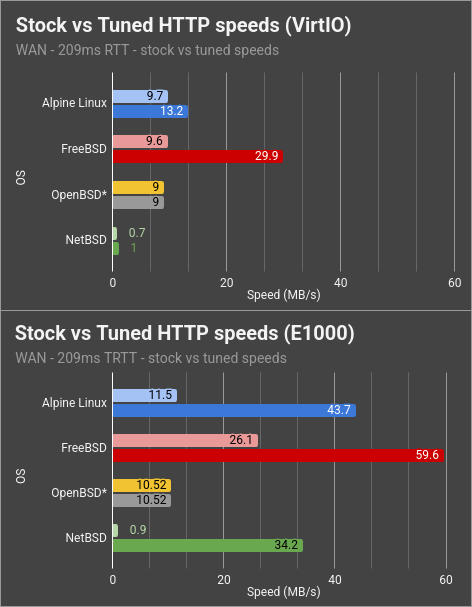

Here I made a comparison between VirtIO and E1000, showing both untuned and tuned settings. You can see that they all improve on E1000, especially NetBSD, which was pretty much crawling on VirtIO:

Yes, NetBSD can reach 35 MB/s no problem.

Tuned performance on E1000

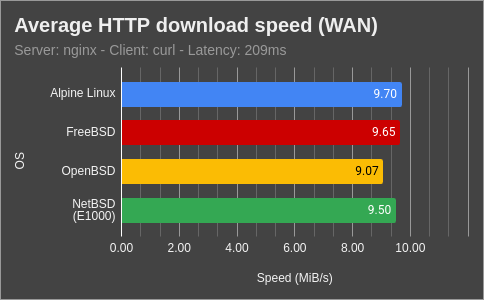

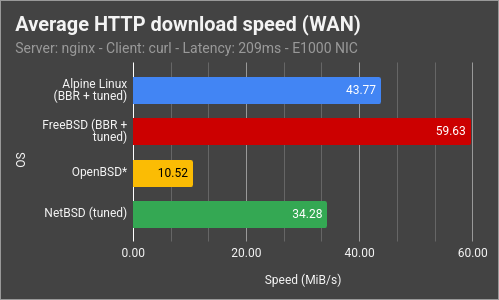

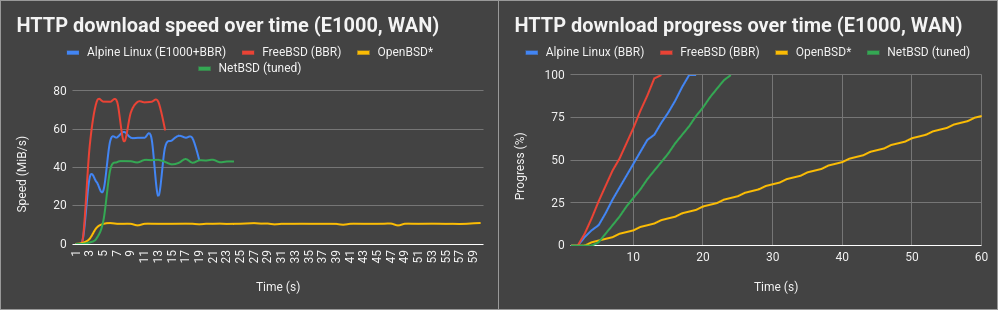

Once I realized that everything performs better on E1000, I decided to tune the buffer sizes of all of them as well. Here's the result:

* Note: OpenBSD does auto-tuning internally and doesn't expose buffer sizes for modification

- FreeBSD can now reach even more impressive values with its BBR congestion algorithm.

- Linux also improves a lot, even though I had expected it to beat FreeBSD.

- OpenBSD for some reason doesn't want to go further than 10 MB/s - I wish it had some tunables for this. Maybe it does and I'm just not aware of them.

- NetBSD does pretty well now too, although it's the most sensitive to congestion in general.

Values used here:

Linux (sysctl.conf)

FreeBSD (sysctl.conf)

NetBSD (sysctl.conf)
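As a starting point for picking values of this kind, here is a hedged Python sketch that turns a maximum TCP buffer size into sysctl.conf lines for each OS. These are not the values behind the graphs above: the 8 MiB figure is an arbitrary example, the helper name is made up, the Linux min/default fields are placeholders, and setting the global cap to twice the TCP maximum is only a rule-of-thumb assumption. The sysctl names are the usual ones on each system, but check `sysctl -a` on your release before relying on them.

```python
# "{b}" is the TCP buffer maximum in bytes, "{cap}" the global socket-buffer cap.
TEMPLATES = {
    "linux": [
        "net.core.rmem_max={b}",
        "net.core.wmem_max={b}",
        # Fields are min / default / max; min and default are illustrative.
        "net.ipv4.tcp_rmem=4096 131072 {b}",
        "net.ipv4.tcp_wmem=4096 16384 {b}",
    ],
    "freebsd": [
        "kern.ipc.maxsockbuf={cap}",
        "net.inet.tcp.recvbuf_max={b}",
        "net.inet.tcp.sendbuf_max={b}",
    ],
    "netbsd": [
        "kern.sbmax={cap}",
        "net.inet.tcp.recvbuf_max={b}",
        "net.inet.tcp.sendbuf_max={b}",
    ],
}


def sysctl_lines(os_name: str, max_buf: int) -> list[str]:
    """Return sysctl.conf lines for one OS, given a TCP buffer maximum in bytes."""
    if max_buf <= 0:
        raise ValueError("max_buf must be positive")
    try:
        templates = TEMPLATES[os_name.lower()]
    except KeyError:
        raise ValueError(f"no template for {os_name!r}") from None
    # Assumption: keep the global cap above the TCP maximum to leave
    # headroom for per-buffer overhead.
    return [t.format(b=max_buf, cap=2 * max_buf) for t in templates]


if __name__ == "__main__":
    EXAMPLE_BUF = 8 * 1024 * 1024  # arbitrary example, not a recommendation
    for name in TEMPLATES:
        print(f"# {name}")
        print("\n".join(sysctl_lines(name, EXAMPLE_BUF)))
```

OpenBSD is left out on purpose, since it tunes its buffers internally.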

SCP trouble

After being happy with this result, I decided to transfer some files with scp to my NetBSD server, only to find this:

SCP transfers were extremely slow. After banging my head against the keyboard for a while, I found the fix:

- Make sure you use a big enough recvspace

- If you use NetBSD's sshd, enable HPN (set `HPNDisabled no` in `sshd_config`)

- Or alternatively, use upstream OpenSSH (security/openssh)

Then all was right with the world:

TCP stack patch

I was also pointed to a patch for the TCP stack of the NetBSD kernel by Michael van Elst, which could improve recovery times when packets have to be retransmitted after loss. You can find the patch here.

My personal take

I'm very happy now that NetBSD can reach great speeds and I can't wait to use it in production. It quickly became one of my favorite operating systems.

Regarding speeds: personally, I don't need that much throughput. What I noticed is that increasing the buffer sizes too much makes the speeds somewhat unstable, so I'm fine with lowering them a bit.

I'm not sure why VirtIO performs generally worse - it shouldn't, as it's a paravirtualized driver. Maybe it has to do with a misconfiguration of the nodes I tried them on, although they were two different providers.

NetBSD is hit particularly hard by it, though. I might end up opening a problem report for that. The sshd thing was also a bit weird, which is something I mentioned on their mailing lists as well.

Nonetheless, I think they're all fine for my use case. I run a mail server, web servers, XMPP, and Matrix, along with other services that don't really need high throughput. For that reason I feel safe moving my servers to the BSDs soon. I'll post a maintenance notice when I'm ready to do the migration.

Thanks to all the friendly people over at the netbsd-users@ mailing list - your support and knowledge were essential.

If I missed anything, please feel free to contact me, preferably by e-mail.